By Etrit Demaj

Originally published on Mann Report

New energy and utility mandates now affect over 50 jurisdictions across the United States. And that number is growing.

Rapidly evolving regulation across the United States is putting increasing pressure on enterprise portfolios to provide accurate sustainability reporting, identify where cost efficiencies are generated and ensure data transparency.

Staying compliant is one of the most challenging operational hurdles that these portfolios face as they scale, especially if they comprise hundreds of assets spread over thousands of miles.

Today, they face a very basic challenge that threatens compliance: the tools they use to manage and report utility data are no longer useful.

Old Tools Causing Problems

Utility operations teams at large real estate firms are increasingly facing a daily struggle to manage their data. The software most companies use was designed for a simpler time, when portfolio size and the level of tech integration were commensurate.

Data is being pulled manually from a fragmented constellation of utility portals that each require a separate login. Bills arrive as PDFs, processed by hand or through basic optical character recognition (OCR), then copied into spreadsheets for reconciliation.

Tenant consumption data arrives via email chains or secure file transfer protocol (SFTP) drops. Accounting platforms like SAP and Oracle, and property management systems such as Yardi and MRI, were built for financial management, not utility intelligence, yet they are being stretched to fill gaps they were never designed to cover.

Without an integrated system of record, the consequences extend well beyond operations. For everyone from the CFO to finance teams and asset managers, budgets are more likely to rest on rough estimates, billing errors and duplicate charges go undetected for months, and forecasting becomes an exercise in educated guesswork.

This unreliability has a direct line to capital allocation decisions and investor reporting. The result is a workflow built on patchwork methodology rather than architecture.

Data lives in a dozen disconnected places, and pulling it together is a time-consuming task in itself, one that must be done before the data can be shared with third parties, let alone analyzed.

And the problem isn’t always a lack of data. Operators managing multi-site portfolios consistently report the opposite: plenty of data, scattered everywhere, with no reliable mechanism to consolidate it and, as a result, no way to report quickly or accurately.

Why Scattered Data is Blocking AI

Relying on outdated software could not come at a more critical juncture. Artificial intelligence (AI) is beginning to fundamentally reshape how operators in our industry do everything from forecasting energy use and flagging billing errors to mapping out carbon-reduction measures realistically and transparently.

Globally, however, there has been an eagerness to jump onto the AI bandwagon without having the requisite data foundations for these systems to work properly.

According to Salesforce’s State of Data & Analytics report, 84% of data and analytics leaders believe that their strategies need to be overhauled for effective AI implementation. AI systems need clean, organized inputs (here, operating and utility data) from all stakeholders; otherwise, the AI cannot access or process that data.

But accessibility alone is not enough; data from different systems also needs to speak the same language.

This shared ontology — or common data structure — creates a normalization layer for the system to draw meaningful conclusions from.
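To make the idea of a normalization layer concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the source names (`portal_a`, `portal_b`), their field names, the `UtilityReading` schema and the electricity-only unit table are hypothetical and do not describe any particular vendor's data model. It shows two differently shaped records being mapped onto one common structure with a single unit.

```python
from dataclasses import dataclass
from datetime import date

# Conversion factors to kWh (electricity only, for illustration).
TO_KWH = {"kwh": 1.0, "mwh": 1000.0, "wh": 0.001}


@dataclass(frozen=True)
class UtilityReading:
    """Hypothetical common schema that every source is mapped onto."""
    site_id: str
    period_start: date
    energy_kwh: float
    cost_usd: float


# Hypothetical per-source mappings: common field -> field name used by that source.
FIELD_MAPS = {
    "portal_a": {"site": "SiteCode", "start": "BillStart",
                 "usage": "Usage", "unit": "UOM", "cost": "AmountDue"},
    "portal_b": {"site": "building_id", "start": "period_from",
                 "usage": "consumption", "unit": "units", "cost": "total"},
}


def normalize(source: str, record: dict) -> UtilityReading:
    """Translate one raw record into the common schema, converting usage to kWh."""
    fields = FIELD_MAPS[source]
    unit = str(record[fields["unit"]]).strip().lower()
    if unit not in TO_KWH:
        raise ValueError(f"unsupported unit: {unit!r}")
    return UtilityReading(
        site_id=str(record[fields["site"]]).strip().upper(),
        period_start=date.fromisoformat(record[fields["start"]]),
        energy_kwh=float(record[fields["usage"]]) * TO_KWH[unit],
        cost_usd=round(float(record[fields["cost"]]), 2),
    )


# The same bill, as two different sources might report it.
a = normalize("portal_a", {"SiteCode": " nyc-001 ", "BillStart": "2024-01-01",
                           "Usage": "1.5", "UOM": "MWh", "AmountDue": "210.40"})
b = normalize("portal_b", {"building_id": "nyc-001", "period_from": "2024-01-01",
                           "consumption": 1500, "units": "kWh", "total": 210.4})
```

Once both records are in the same shape and unit, they compare as equal, which is what lets downstream analytics treat them as one fact rather than two.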

To start, you have to build tools to scrape this data, verify it and feed it back into a unified data system. Only then will operators see the full benefits of AI-powered tools.
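The "verify" step can be sketched in a few lines of Python. This is an illustrative assumption rather than a description of any product: the field names, the `verify_bills` helper and the rule that a duplicate is a bill with the same account, billing period and amount are all hypothetical. The sketch separates bills that are safe to load from those that need a human look.

```python
# Fields a bill must carry before it is loaded (hypothetical names).
REQUIRED = ("account", "period_start", "period_end", "amount")


def verify_bills(bills):
    """Split bills into (accepted, flagged); flagged items carry a reason."""
    accepted, flagged = [], []
    seen = set()
    for bill in bills:
        # Reject records with missing or empty required fields.
        missing = [f for f in REQUIRED if bill.get(f) in (None, "")]
        if missing:
            flagged.append((bill, "missing: " + ", ".join(missing)))
            continue
        # Assumed duplicate rule: same account, same period, same amount.
        key = (bill["account"], bill["period_start"],
               bill["period_end"], round(float(bill["amount"]), 2))
        if key in seen:
            flagged.append((bill, "possible duplicate charge"))
            continue
        seen.add(key)
        accepted.append(bill)
    return accepted, flagged


bills = [
    {"account": "A-100", "period_start": "2024-01-01",
     "period_end": "2024-01-31", "amount": "210.40"},
    {"account": "A-100", "period_start": "2024-01-01",
     "period_end": "2024-01-31", "amount": 210.4},   # same bill, re-sent
    {"account": "A-200", "period_start": "2024-01-01",
     "period_end": "2024-01-31", "amount": ""},      # amount not captured
]
accepted, flagged = verify_bills(bills)
for bill, reason in flagged:
    print(bill["account"], "->", reason)
```

A real pipeline would likely add more checks, such as overlapping billing periods or usage far outside a site's history, but the shape stays the same: nothing reaches the unified system until it has passed an explicit rule.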

Rebuilding Your Data Management Foundations with AI

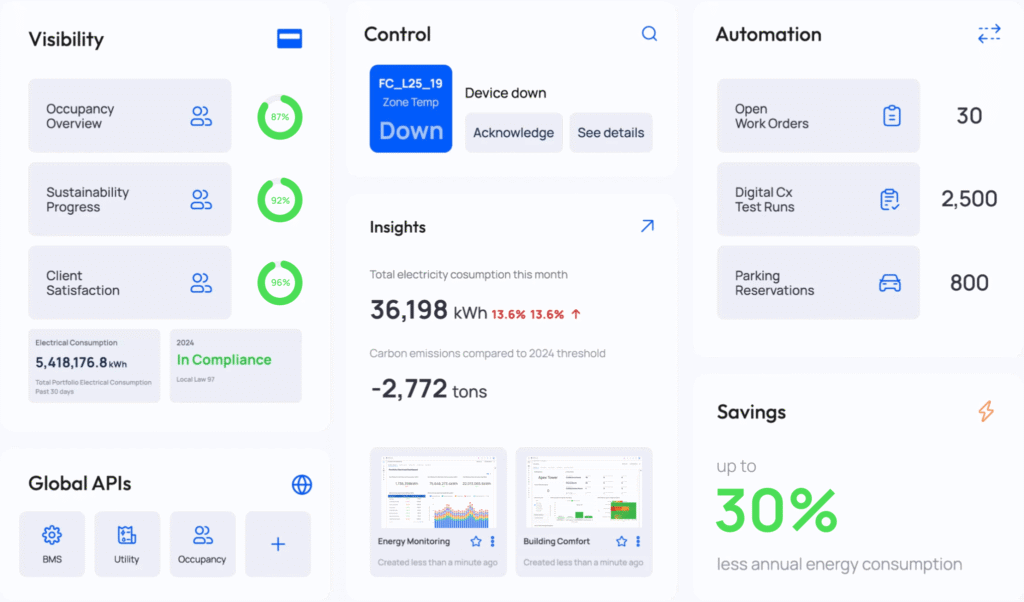

Alongside meeting the compliance imperative, real estate companies must upgrade to a unified intelligence layer. That layer enables AI-driven forecasting that sharpens financial decision-making and offers greater portfolio-wide visibility, while also satisfying investor expectations and the compliance demands that come with operating at scale.

This is why KODE Labs launched EnerG AI-Powered Utility Intelligence.

EnerG replaces manual spreadsheets and multiple disconnected portals with one centralized system. It organizes utility data so teams can use AI to communicate daily operational performance across all departments in real time.

By placing data integrity at its core, platforms such as EnerG eliminate manual utility data collection and validation; offer portfolio-wide visibility of all performance metrics (energy, water, waste, carbon, cost); enable alignment of sustainability targets with budgets, capital planning and efficiency initiatives; and deliver audit-ready reporting for regulators.

Meeting the Regulatory Deadline

For real estate organizations to succeed in this rapidly changing landscape, there needs to be a fundamental mindset shift in how we view and value data management.

Utility data, when properly unified and activated, is one of the most powerful strategic assets a company can have. It can enable real estate owners and operators to decrease costs, enhance equipment performance and lifespan and increase ROI.

The companies that seize this opportunity over the next five years will take data management from being viewed as a back-office task to a central part of the executive conversation.

And they will be rewarded handsomely for that foresight. Those that lack it will be left behind entirely.